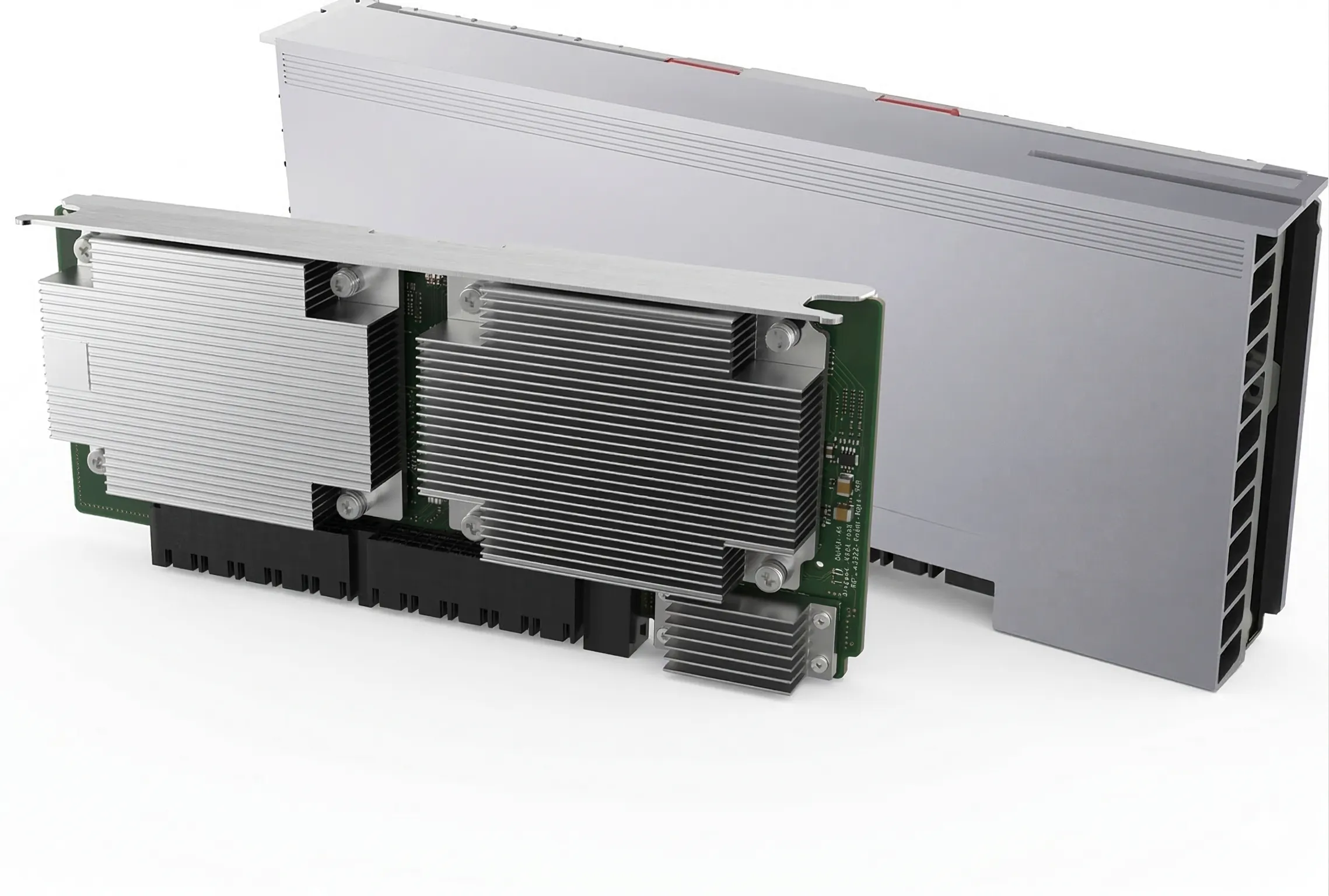

YH002 Mezzanine Module 96GB

Next-generation AI chip designed as a foundation for cloud computing and large-scale language models (LLMs). It’s not just an accelerator, but a full architectural platform focused on matrix efficiency, scalability, and flexibility for custom AI workloads. At its core is a hybrid instruction set approach: RISC-V (base) with the RVV (vector extension), enhanced by custom matrix instructions and a proprietary Virtual Instruction Set Architecture (VISA). This provides a key advantage — the ability to finely tune execution for specific models and algorithms, unlike the fixed instruction sets found in traditional GPUs. From a compute perspective, the chip follows a TPU-like architecture. It features dual systolic array matrix engines optimized for dense linear algebra operations typical in LLMs and deep learning. Complementing this is a high-performance 4D DMA engine, addressing one of the main bottlenecks in modern accelerators: data movement. As a result, the design achieves high efficiency in both computation and memory transfer. A strong emphasis is placed on optimization for large models, particularly architectures similar to DeepSeek. The chip supports Blocked FP8 precision, enabling significant reductions in memory usage and increased throughput without critical accuracy loss — especially important for both training and inference at scale. For scalability, the chip uses a proprietary ELink interconnect. Positioned as an alternative to NVIDIA NVLink, it is designed for building large-scale clusters and supports advanced features like In-Network Computing. This allows certain operations to be executed directly within the network, reducing latency and offloading compute from the chips themselves. Overall, this is a data center–class AI processor tailored for: large language models (LLMs) distributed training high-throughput inference scalable AI cluster deployments The core idea is a shift away from general-purpose GPU architectures toward deep vertical optimization for AI workloads, where not only FLOPS matter, but also efficiency in memory access, interconnect, and custom data formats."